Two ways to build an AI voicebot: stitching versus realtime

How an AI voicebot processes speech determines whether it sounds natural or choppy and unreliable. Two architectures are currently in use among builders, and the choice between them affects the caller experience, system reliability, and what the bot can pick up from the conversation. The older approach chains three separate components together, while the newer one uses a single model that listens and speaks directly.

The classic approach: stitching

When the first voicebots were built, it made sense to connect three existing components. Incoming speech went through a speech-to-text engine that turned it into text, then a language model read that text and formulated an answer, and finally a text-to-speech engine converted the answer back into audible speech. This architecture is called “stitching” in the industry, because you chain three independent systems into one pipeline.
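The chain described above can be sketched as three functions called in sequence. This is a minimal illustration rather than a production design: `speech_to_text`, `generate_reply`, and `text_to_speech` are hypothetical stand-ins for whichever STT, LLM, and TTS providers a team uses, and the stub stages return canned values so the example runs on its own.

```python
from typing import Callable

# Hypothetical stage signatures; real providers expose their own APIs.
SpeechToText = Callable[[bytes], str]
LanguageModel = Callable[[str], str]
TextToSpeech = Callable[[str], bytes]


def stitched_turn(
    audio_in: bytes,
    speech_to_text: SpeechToText,
    generate_reply: LanguageModel,
    text_to_speech: TextToSpeech,
) -> bytes:
    """Handle one caller turn by running the three stages in sequence."""
    transcript = speech_to_text(audio_in)    # stage 1: audio -> text
    reply_text = generate_reply(transcript)  # stage 2: text -> text
    return text_to_speech(reply_text)        # stage 3: text -> audio


# Stub stages so the sketch runs without any external service.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio bytes are the spoken words


def fake_llm(transcript: str) -> str:
    return f"You said: {transcript}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")  # pretend the encoded text is synthesized audio


audio_out = stitched_turn(b"I want to change my booking", fake_stt, fake_llm, fake_tts)
print(audio_out)
```

Each stage only sees the output of the stage before it, which is why a failure or delay in any one of them affects the whole turn.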

For a time that delivered usable results, and for teams unwilling to train their own speech model it was the only practical route. In practice, however, three vulnerabilities emerge, because each link in the chain can fail independently. Speech recognition might misunderstand a sentence, the language model might give a slow or incorrect answer, and voice synthesis might fail at an awkward moment. Many teams therefore build in a backup with an alternative TTS or LLM provider so the bot keeps working during an outage. That solves the downtime problem, but when the TTS provider switches, callers suddenly hear a completely different voice and become confused about who they are actually talking to.
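A backup of this kind might look like the sketch below, which tries TTS providers in order and returns the first result that succeeds. The provider functions and voice names are invented for illustration, and the outage is simulated; the point is that the fallback keeps the call alive but returns audio in a different voice.

```python
from typing import Callable, Sequence, Tuple

# A provider is a (voice_name, synthesize) pair. Both names are illustrative.
TtsProvider = Tuple[str, Callable[[str], bytes]]


def synthesize_with_fallback(
    text: str, providers: Sequence[TtsProvider]
) -> Tuple[str, bytes]:
    """Try each TTS provider in order; return (voice_name, audio) from the first that works."""
    last_error = None
    for voice_name, synthesize in providers:
        try:
            return voice_name, synthesize(text)
        except Exception as error:  # a real system would catch narrower errors
            last_error = error
    raise RuntimeError("all TTS providers failed") from last_error


def primary_tts(text: str) -> bytes:
    raise ConnectionError("primary provider is down")  # simulated outage


def backup_tts(text: str) -> bytes:
    return text.encode("utf-8")  # stand-in for synthesized audio


voice, audio = synthesize_with_fallback(
    "Your appointment is confirmed.",
    [("primary-voice", primary_tts), ("backup-voice", backup_tts)],
)
print(voice)  # the caller now hears the backup provider's voice
```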

The second drawback may carry even more weight. With stitching, the language model sees only a text transcription, so it cannot perceive the tone, volume, hesitation, and emotion of the caller. An irritated customer and a satisfied customer look identical to the model once their words are written down, and that undermines the emotional sensitivity that makes a conversation valuable. Signals about likely age, native language, or mood get lost in the conversion to text, yet those signals often determine how a human agent would conduct the conversation.
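The loss can be made concrete with a toy example. The `Utterance` type and its acoustic fields below are hypothetical and the values are invented; `transcribe` stands in for a speech-to-text engine that passes only the words downstream.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Utterance:
    words: str
    loudness_db: float      # hypothetical acoustic feature
    speech_rate_wps: float  # hypothetical: words per second


def transcribe(utterance: Utterance) -> str:
    """Stand-in for speech-to-text: only the words survive."""
    return utterance.words


calm = Utterance("I have been waiting for an hour", loudness_db=55.0, speech_rate_wps=2.2)
irritated = Utterance("I have been waiting for an hour", loudness_db=78.0, speech_rate_wps=3.6)

print(calm != irritated)                          # True: the audio differs
print(transcribe(calm) == transcribe(irritated))  # True: the text does not
```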

The new approach: a single realtime speech model

Since OpenAI released gpt-realtime-1.5 on February 24, 2026, there is a second way to build voicebots that works better in most cases. Instead of three separate components in sequence, one model listens and speaks directly, eliminating the entire transcription and synthesis layer. The model understands the words, tone, and emotion of the caller simultaneously, so it can respond to those directly. A demo by Charlierguo shows how smoothly that works in practice.

That delivers concrete benefits in everyday use. There is only one point where something can fail instead of three, so the risk of outage drops significantly. Response time typically stays under 400 milliseconds, so the conversation flows naturally without the handoff delays that accumulate between the stages of a stitched pipeline. Multilingual support is built in, so the same model switches between Spanish, English, Portuguese, and other languages without you configuring that switch beforehand. And because the model processes audio instead of text, it recognizes an irritated customer by their voice and can transfer them directly to an agent without requiring a keyword or explicit escalation.
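The reliability and latency argument can be worked through with simple arithmetic. The figures below are illustrative assumptions, not measurements of any provider: each stage is assumed to be available 99.9% of the time, failures are assumed to be independent, and the per-stage latencies are invented.

```python
import math

# Illustrative assumptions only: none of these figures come from measurements.
stage_availability = {"stt": 0.999, "llm": 0.999, "tts": 0.999}
stage_latency_ms = {"stt": 200, "llm": 350, "tts": 150}

# A serial chain works only if every stage works, so (assuming independent
# failures) its availability is the product of the stage availabilities.
chain_availability = math.prod(stage_availability.values())

# Without streaming overlap between stages, their latencies add up.
chain_latency_ms = sum(stage_latency_ms.values())

print(f"chain availability: {chain_availability:.4%}")
print(f"chain latency: {chain_latency_ms} ms")
```

Under these assumptions the three-stage chain is available roughly 99.7% of the time, compared with 99.9% for a single component with the same assumed availability. Real pipelines often stream partial results between stages, which reduces the summed latency, so the total here is an upper bound for the chosen numbers.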

When stitching is still the right choice

There remains a niche where the older architecture fits better: situations where no live conversation takes place and a recording is analyzed afterward. When a contact center wants to have conversations summarized, coded, or screened for compliance after the call, there is no latency requirement and you can safely choose a specialized component for each step. Think of a medical language model that recognizes healthcare abbreviations and terminology, or a speech-to-text engine specifically trained on a regional dialect. Precision on that single component outweighs the overall conversation experience in those scenarios, because no caller is waiting on the line for an answer.

Our recommendation

For companies that want to have live conversations handled by a voicebot, we recommend the realtime approach in almost all cases. The combination of faster response, reduced outage risk, multilingual support without configuration, and emotional awareness produces a caller experience that does not feel robotic. For post-call analysis and other scenarios where precision on a specific component is decisive, we continue to deploy stitching architectures, because they still deliver the strongest results there.

Our team builds in both architectures

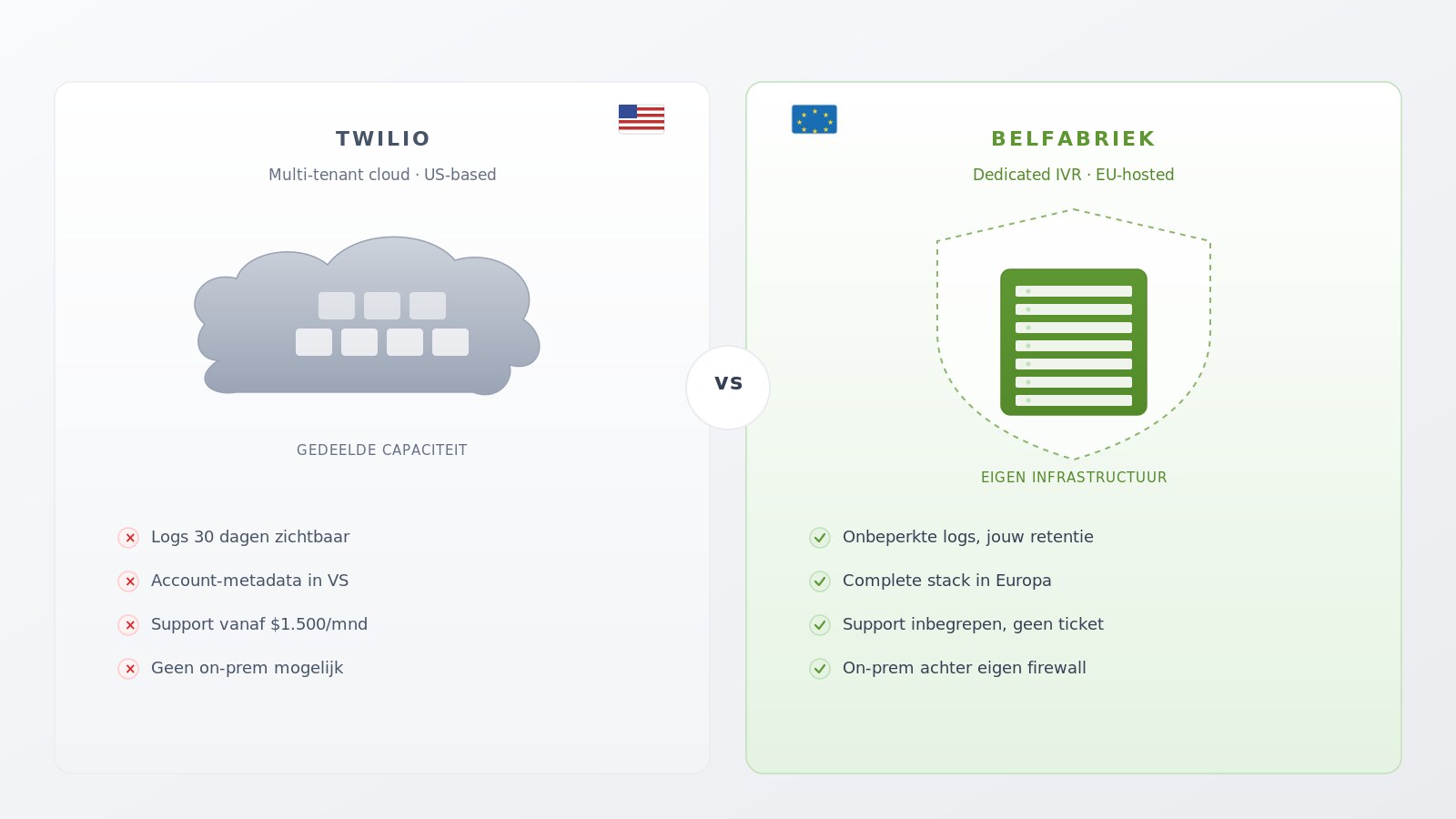

CallFactory builds voicebots in both architectures, depending on what fits best with your call flow. Whether you want a fully managed solution where our team sets everything up from start to finish, or prefer a dedicated IVR on your own infrastructure, we deliver compliant implementations that are available 24 hours a day, seven days a week.

For a practical use case, see how an AI receptionist provides 24/7 availability without extra staff.

Contact our team to discuss which architecture matches your conversations, how the integration with your existing systems works, and when the voicebot can go live. That way you get a clear forecast of timeline and investment, and from day one you can have a voicebot handling both incoming and outgoing calls that listens and speaks at a level that was unthinkable until recently.

Frequently Asked Questions

When is stitching still the right choice?

Stitching is valuable when you don’t need a live conversation but instead want to analyze a recording afterward. You then have the freedom to select a specialized component, such as a medical language model for healthcare terminology or a speech-to-text engine trained on a regional dialect. In those cases, precision on one component outweighs the importance of a smooth conversation experience.

How quickly does a realtime voicebot respond?

Response time typically stays under 400 milliseconds, comparable to a normal phone conversation between two people. Because no separate components run in sequence, the handoff delay that accumulates in a stitched pipeline disappears, so callers rarely realize they are speaking with an AI.

Can the voicebot switch languages during a call?

Yes. Realtime speech models are trained multilingually, so they can switch between Spanish, English, Portuguese, and other languages during the same conversation without you setting up that switch in advance. For companies serving international customers, an entire configuration step falls away.

What happens if the realtime model fails?

We build a fallback route into each project, so the conversation automatically transfers to an agent or a recorded message if the model fails. The caller only notices the transfer, so your call flow stays intact even if there is a disruption on the vendor side.
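A fallback route like this can be sketched as a wrapper around the model call. The `Route` enum, the `route_turn` function, and the `agent_available` flag are hypothetical names chosen for this example and do not describe a specific implementation. The vendor disruption is simulated with a function that always raises.

```python
from enum import Enum
from typing import Callable, Tuple


class Route(Enum):
    VOICEBOT = "voicebot"
    AGENT = "agent"
    RECORDED_MESSAGE = "recorded_message"


def route_turn(
    call_model: Callable[[bytes], bytes], audio_in: bytes, agent_available: bool
) -> Tuple[Route, bytes]:
    """Return (route, audio). Fall back to an agent or a recording if the model call fails."""
    try:
        return Route.VOICEBOT, call_model(audio_in)
    except Exception:  # a real system would also enforce a timeout here
        if agent_available:
            return Route.AGENT, b""
        return Route.RECORDED_MESSAGE, b""


def failing_model(audio: bytes) -> bytes:
    raise TimeoutError("model did not respond")  # simulated vendor disruption


route, _ = route_turn(failing_model, b"hello", agent_available=True)
print(route)
```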

Can the voicebot meet data protection and compliance requirements?

Yes. We build the voicebot so that audio and metadata stay within secure infrastructure and so that data processing agreements are in place with all parties involved. For regulated industries such as healthcare, banking, and insurance, we also supply a self-hosted variant that runs entirely behind your own firewall.